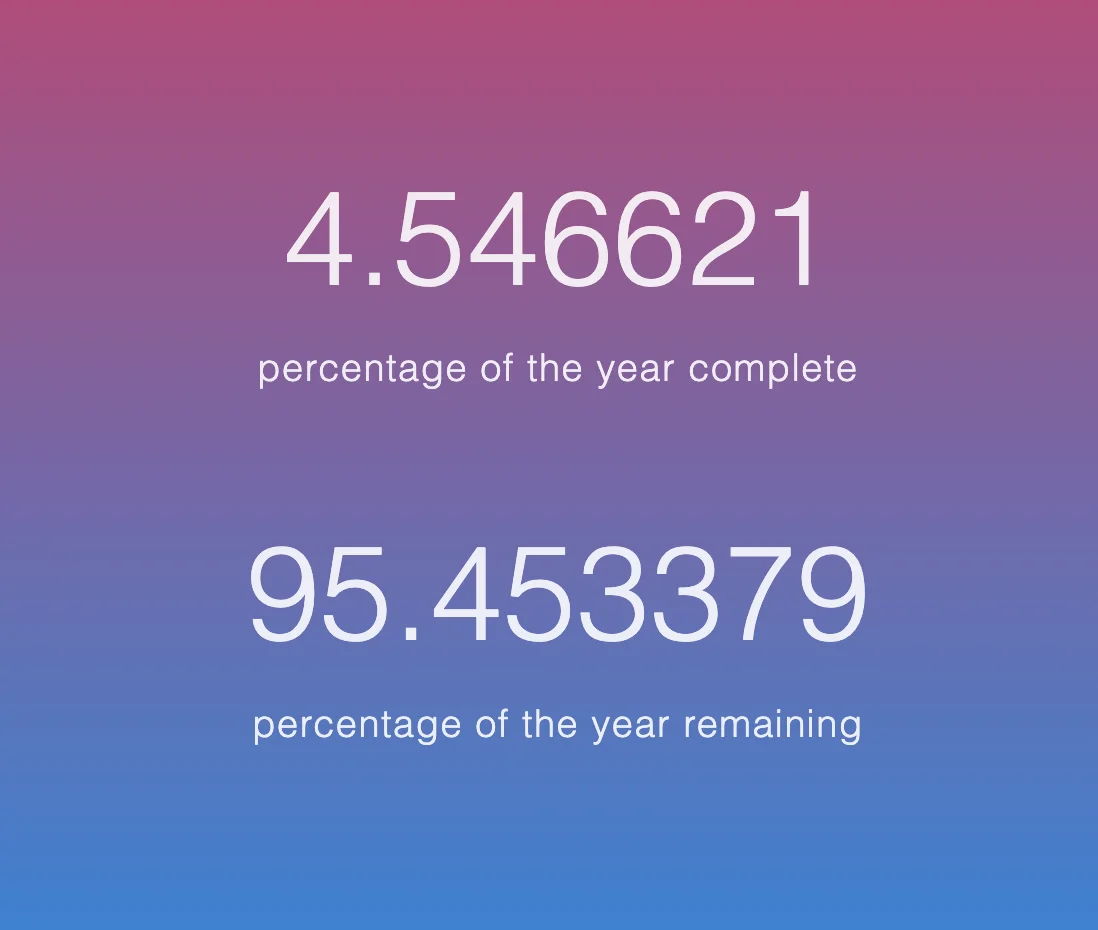

First 4% of this year has passed

It’s really surprising how fast time passes.

I am writing this note on Saturday, January 17th, 2026, at 2:00 PM. At this moment, 4.5% of the year has already passed.
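For the record, the 4.5% figure checks out. Here is a minimal Python sketch (`year_progress` is just an illustrative helper name) that treats the timestamp as naive local time and measures elapsed time against the full calendar year:

```python
from datetime import datetime


def year_progress(moment: datetime) -> float:
    """Fraction of the moment's calendar year that has elapsed (naive datetimes)."""
    start = datetime(moment.year, 1, 1)
    end = datetime(moment.year + 1, 1, 1)
    # Dividing one timedelta by another yields a float ratio
    return (moment - start) / (end - start)


now = datetime(2026, 1, 17, 14, 0)
print(now.strftime("%A"))           # Saturday
print(f"{year_progress(now):.1%}")  # 4.5%
```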

My primary goal for this year is to record what I’ve been thinking, what I’ve done, and what I’m like at the moment. For the past few years, my life has been incredibly hectic with projects, work, research, and everything else. Yet looking back, I feel like something is missing; I feel kind of hollow and lost at the same time.

That is why I am trying to do things differently this year: tracking and recording every aspect of my life, in any format available and as frequently as possible. Since I have never done this before, it has been hard to stay engaged. Seventeen days have already passed, but I am determined to follow through, so I am opening this note now to jot down what I have done over the past few weeks.

Project Aeolo

Aeolo | Generative Engine Optimization (GEO) Platform. Secure your brand visibility in AI search engines. Optimize your domain to be cited by ChatGPT, Perplexity, Gemini, and more. (www.tryaeolo.com)


I am working on Project Aeolo, a B2B AI software-as-a-service (SaaS) platform that provides customers with brand intelligence. The platform analyzes how a brand is represented within modern AI applications such as ChatGPT, Gemini, Perplexity, and Grok.

The service performs three main functions:

- Analyzes what needs to be improved

- Develops strategies to close those intelligence gaps

- Works to improve visibility by publishing articles optimized for the customers’ goals
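To make the “analyze” step a bit more concrete, here is a rough sketch of one possible visibility metric, assuming you have already collected AI answers to a set of relevant prompts. The names (`analyze_visibility`, `VisibilityReport`), the fictional brand, and the simple substring matching are hypothetical illustrations, not necessarily how Aeolo measures visibility.

```python
from dataclasses import dataclass


@dataclass
class VisibilityReport:
    mention_rate: float   # share of answers that mention the brand name
    citation_rate: float  # share of answers that include the brand's domain


def analyze_visibility(answers: list[str], brand: str, domain: str) -> VisibilityReport:
    """Hypothetical metric: how often a brand shows up in AI-generated answers."""
    if not answers:
        return VisibilityReport(0.0, 0.0)
    brand_l, domain_l = brand.lower(), domain.lower()
    # Case-insensitive substring checks; a real system would need entity matching
    mentions = sum(brand_l in a.lower() for a in answers)
    citations = sum(domain_l in a.lower() for a in answers)
    n = len(answers)
    return VisibilityReport(mentions / n, citations / n)


# Toy, made-up answers about a fictional brand "Acme"
answers = [
    "For widgets, many people recommend Acme (see acme.example).",
    "Popular options include several other vendors.",
    "Acme is known for fast shipping.",
]
print(analyze_visibility(answers, "Acme", "acme.example"))
```

The gap between the two rates (mentioned but not cited) is the kind of “intelligence gap” the strategy step would then try to close.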

The idea itself is not new. But since starting my journey as a founder last year, I have felt how hard it is to figure out what people want. It felt like I was just praying that people would love my idea. I wanted something more: an idea that already has foundational thinking and existing business models behind it, so that I can improve upon it or outperform the competitors.

This is mainly because I don’t think I understand what I truly want from this journey. That is really unpleasant to accept, but it’s true. I felt I had to be sharper and more focused on what I love to do. This is not a fast move and might not be the answer to becoming a good founder, but I decided to slow down and improve step by step.

I’m doing this project because it can actually help people, and there are real customers out there. I’ve met several potential customers around the world, both small and large, who want to optimize their AI visibility. It might earn me some bucks (and maybe some bugs too). Anyway, that’s why I’m doing this. I’m building it entirely on my own. That doesn’t necessarily make me a fast mover, but I want to learn how to do everything end-to-end as a founder.

What I think matters most for improving a business’s AI visibility is an AI (or any kind of intelligence) with an integrated understanding of what the business sells and who its customers are. This is the key part: the information must be fed to the AI as one complete body of knowledge, not in fragments, so that it can fully understand the business and uncover the hidden gems that could increase its revenue. As far as I know, most businesses out there are not that deep into AI integration yet. I hope my product keeps gaining a competitive edge as I keep shipping.
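One way to picture the “whole set of knowledge, not fragmented” idea: keep everything about a business in a single structured profile and render it into one context block, instead of handing scattered snippets to the model separately. The `BrandProfile` class, its fields, and the example brand are hypothetical, just a sketch of the idea.

```python
from dataclasses import dataclass, field


@dataclass
class BrandProfile:
    """Hypothetical single source of truth about one business."""
    name: str
    products: list[str]
    target_customers: list[str]
    differentiators: list[str] = field(default_factory=list)

    def to_context(self) -> str:
        """Render the full profile as one block so a model sees it all together."""
        lines = [
            f"Brand: {self.name}",
            "Products: " + "; ".join(self.products),
            "Target customers: " + "; ".join(self.target_customers),
            # Make missing knowledge explicit instead of silently omitting it
            "Differentiators: " + ("; ".join(self.differentiators) or "unknown"),
        ]
        return "\n".join(lines)


profile = BrandProfile(
    name="Acme",  # fictional example brand
    products=["industrial widgets"],
    target_customers=["small manufacturers"],
)
print(profile.to_context())
```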

I hope I can land a contract by the end of January. But idk, we’ll have to see.

Researching VLA models

I’m starting an internship at SNU’s Physical Intelligence Lab and am currently looking for a project to work on. For the past few weeks, I’ve been reading papers on recent vision-language-action (VLA) models, and I came across some interesting ideas. I just want to make a short note of them here.

I always feel like I’m way behind on modern CS research. This year I hope to catch up and understand the foundational ideas behind the recent magic that is changing the whole world. So this month I’ve been catching up on recent work; some of it is pretty basic, such as reproducing GPT-2, flow-matching basics, and so on. Here I’d rather note down the more original ideas.

Intelligence = compression?

I found this fairly old theory that explains how autoregressive (AR) models suddenly gained “emergent” intelligent capabilities. Many people have written impressive articles on the topic, and it was interesting to dig into.

https://lewish.io/posts/intelligence-and-compression

https://x.com/DrJimFan/status/1691850114577711499?s=20
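The core of the argument is that prediction and compression are two sides of the same coin: with arithmetic coding, a symbol the model assigns probability p costs about `-log2(p)` bits, so a model that predicts better also compresses better. A toy illustration in Python; the function names are made up, and the “model” is a character bigram counter, nowhere near an LLM.

```python
import math
from collections import defaultdict


def uniform_bits(text: str, alphabet: str) -> float:
    """Code length when every symbol is equally likely: n * log2(|alphabet|)."""
    return len(text) * math.log2(len(alphabet))


def adaptive_bigram_bits(text: str, alphabet: str) -> float:
    """Ideal arithmetic-coding length, in bits, under an online order-1 model.

    Predictions use only symbols already seen, so a decoder could rebuild
    the exact same probabilities; each symbol costs -log2(p) bits.
    """
    if not set(text) <= set(alphabet):
        raise ValueError("text contains symbols outside the alphabet")
    k = len(alphabet)
    counts = defaultdict(lambda: defaultdict(int))  # counts[prev][next]
    totals = defaultdict(int)                       # totals[prev]
    prev = None  # the first symbol has no context
    bits = 0.0
    for ch in text:
        # Add-one (Laplace) smoothing keeps every probability above zero
        p = (counts[prev][ch] + 1) / (totals[prev] + k)
        bits -= math.log2(p)
        counts[prev][ch] += 1
        totals[prev] += 1
        prev = ch
    return bits


alphabet = "abcdefghijklmnopqrstuvwxyz "
text = "the cat sat on the mat " * 20
print(f"uniform: {uniform_bits(text, alphabet):.0f} bits")
print(f"bigram:  {adaptive_bigram_bits(text, alphabet):.0f} bits")
```

On repetitive text the bigram model needs far fewer bits than the uniform baseline because it has picked up what tends to come next, which is the compression-as-understanding intuition in miniature.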

Online learning vs. offline learning

Fine-Tuning Vision-Language-Action Models: Optimizing Speed and Success (arXiv.org)

Recent vision-language-action models (VLAs) build upon pretrained vision-language models and leverage diverse robot datasets to demonstrate strong task execution, language following ability, and semantic generalization. Despite these successes, VLAs struggle with novel robot setups and require fine-tuning to achieve good performance, yet how to most effectively fine-tune them is unclear given many possible strategies. In this work, we study key VLA adaptation design choices such as different action decoding schemes, action representations, and learning objectives for fine-tuning, using OpenVLA as our representative base model. Our empirical analysis informs an Optimized Fine-Tuning (OFT) recipe that integrates parallel decoding, action chunking, a continuous action representation, and a simple L1 regression-based learning objective to altogether improve inference efficiency, policy performance, and flexibility in the model's input-output specifications. We propose OpenVLA-OFT, an instantiation of this recipe, which sets a new state of the art on the LIBERO simulation benchmark, significantly boosting OpenVLA's average success rate across four task suites from 76.5% to 97.1% while increasing action generation throughput by 26×. In real-world evaluations, our fine-tuning recipe enables OpenVLA to successfully execute dexterous, high-frequency control tasks on a bimanual ALOHA robot and outperform other VLAs (π₀ and RDT-1B) fine-tuned using their default recipes, as well as strong imitation learning policies trained from scratch (Diffusion Policy and ACT) by up to 15% (absolute) in average success rate. We release code for OFT and pretrained model checkpoints at https://openvla-oft.github.io/.


I’ve been following VLA models, from traditional end-to-end (e2e) models to recent RTC-based ones.

This paper notes that even when trained on exactly the same data, offline training was not enough: the models fail to “behave” as well as they perceive. Interesting.
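Two ingredients of the OFT recipe in the abstract are easy to picture: the model outputs a whole chunk of K continuous actions at once (each D-dimensional) instead of decoding discrete action tokens one by one, and training uses a plain L1 regression loss on that chunk. Below is a minimal plain-Python sketch of such a loss; the function name and the toy numbers are hypothetical, and this is not taken from the released OFT code.

```python
def l1_chunk_loss(pred: list[list[float]], target: list[list[float]]) -> float:
    """Mean absolute error over an action chunk of K steps x D action dims."""
    if len(pred) != len(target):
        raise ValueError("predicted and target chunks have different lengths")
    total, count = 0.0, 0
    for pred_step, target_step in zip(pred, target):
        if len(pred_step) != len(target_step):
            raise ValueError("action dimensions do not match")
        for p, t in zip(pred_step, target_step):
            total += abs(p - t)
            count += 1
    if count == 0:
        raise ValueError("empty action chunk")
    return total / count


# A chunk of K=2 future actions, each with D=2 dimensions (toy numbers)
pred = [[0.0, 1.0], [2.0, 3.0]]
target = [[0.5, 1.0], [2.0, 2.0]]
print(l1_chunk_loss(pred, target))  # 0.375
```

Because all K x D values come out of a single forward pass rather than one decoding step per action token, chunked parallel prediction is a large part of where the throughput gain reported in the abstract comes from.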

Random thoughts

- https://www.youtube.com/watch?v=orQKfIXMiA8&pp=ygUZaW1wb3J0YW5jZSBvZiBiZWluZyBib3JlZA%3D%3D

- Everyone is so dopaminated. The detoxified life the speaker describes hits different.

- Proximity theory

- I couldn’t find where I saw it, but there was a post on X by a man saying he just hung out with people he thought were cool, and that it led to his success afterwards.

- These days, as I build and read all by myself, I’ve become desperate to meet someone truly inspiring. I hope I run into someone like that very soon.